Sep 30, 2024

Debugging the usual censorshit issues, finally got sick of looking at normal

tcpdump output, and decided to pipe it through a simple translator/colorizer script.

I think it's one of those cases where a picture is worth a thousand words:

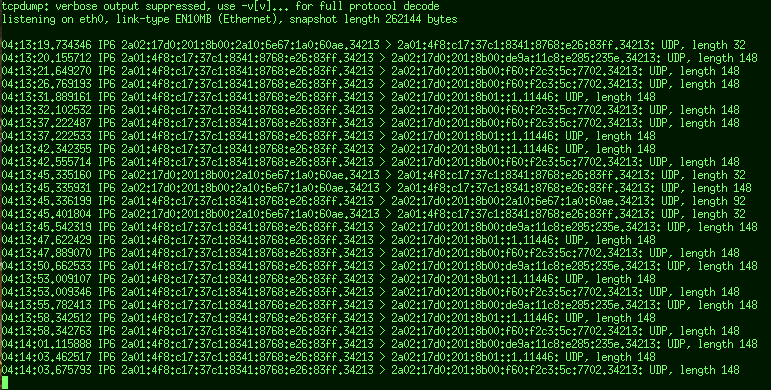

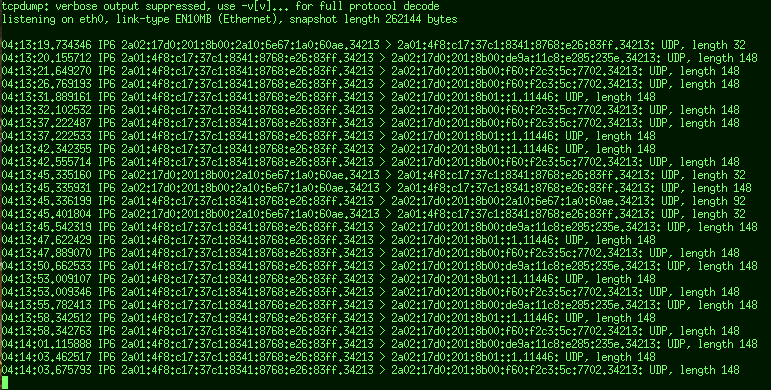

This is very hard to read, especially when it's scrolling,

with long generated IPv6'es in there.

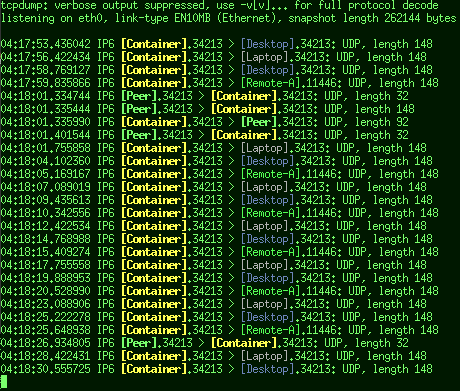

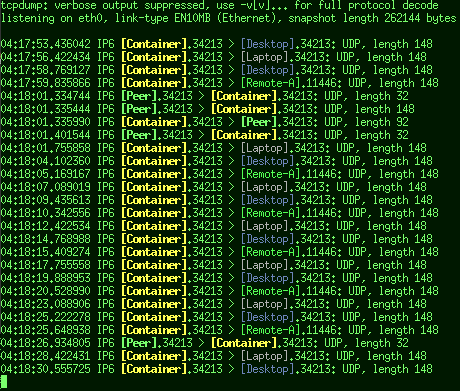

While this IMO is quite readable:

Immediately obvious who's talking to whom and when, where it's especially

trivial to focus on packets from specific hosts by their name shape/color.

Difference between the two is this trivial config file:

2a01:4f8:c17:37c1: local.net: !gray

2a01:4f8:c17:37c1:8341:8768:e26:83ff [Container] !bo-ye

2a02:17d0:201:8b0 remote.net !gr

2a02:17d0:201:8b01::1 [Remote-A] !br-gn

2a02:17d0:201:8b00:2a10:6e67:1a0:60ae [Peer] !bold-cyan

2a02:17d0:201:8b00:f60:f2c3:5c:7702 [Desktop] !blue

2a02:17d0:201:8b00:de9a:11c8:e285:235e [Laptop] !wh

...which sets host/network/prefix labels to substitute for the unreadable address parts
(with hosts in brackets, as a convention), plus colors/highlighting for those labels
(using either full color names or two-letter DIN 47100-like abbreviations, for brevity).

Plus the script to pipe that boring tcpdump output through - tcpdump-translate.
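The label substitution that such a config implies can be sketched in a few lines of Python. This is only a minimal illustration, not the actual tcpdump-translate code: the `parse_rules` and `translate` names, the small `ANSI` color table and the plain longest-prefix-first string replacement are all assumptions, and the two-letter color abbreviations are not handled here.

```python
# Assumed subset of color names, mapped to ANSI SGR codes
ANSI = {"gray": "90", "blue": "34", "cyan": "36", "white": "37"}

def parse_rules(text):
    """Parse '<addr-prefix> <label> [!color]' lines, skipping blanks and comments."""
    rules = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        fields = line.split()
        if len(fields) < 2:
            continue
        color = fields[2].lstrip("!") if len(fields) > 2 else None
        rules.append((fields[0], fields[1], color))
    # Longest prefixes first, so full host addresses win over their networks
    rules.sort(key=lambda rule: len(rule[0]), reverse=True)
    return rules

def translate(line, rules, use_color=True):
    """Replace every known address prefix in the line with its (colored) label."""
    for prefix, label, color in rules:
        code = ANSI.get(color) if use_color else None  # unknown colors stay plain
        repl = f"\033[{code}m{label}\033[0m" if code else label
        line = line.replace(prefix, repl)
    return line
```

A loop over `sys.stdin` calling `translate()` on each line of `tcpdump -ln` output would turn this into a pipe filter, and commenting out config lines could then double as a filter if non-matching lines get dropped.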

Another useful feature of such a script turns out to be filtering -
tcpdump command-line quickly gets unwieldy with "host ... && ..." specs,
while in the config above it's trivial to comment/uncomment lines and filter
by whatever network prefixes, instead of cramming it all into a shell prompt.

tcpdump has some of this functionality via DNS reverse-lookups too,

but I really don't want it resolving any addrs that I don't care to track specifically,

which often makes output even more confusing, with long and misleading internal names

assigned by someone else for their own purposes popping up in wrong places, while still

remaining indistinct and lacking colors.

Aug 06, 2024

For TCP connections, finding which processes are on either end seems pretty trivial - old netstat (from net-tools project)

and modern ss (iproute2) tools do it fine, where you can easily grep both listening

or connected end by IP:port they're using.

But ss -xp for unix sockets (AF_UNIX, often loosely lumped together with "named pipes")
doesn't work like that - it only prints socket path for the listening end of the connection,
which makes lookups by socket path unhelpful, at least with the current iproute-6.10.

"at least with the current iproute" because manpage actually suggests this:

ss -x src /tmp/.X11-unix/*

Find all local processes connected to X server.

Where socket is wrong for modern X - easy to fix - and -p option seems to be

omitted (to show actual processes), but the result is also not at all "local

processes connected to X server" anyway:

# ss -xp src @/tmp/.X11-unix/X1

Netid State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

u_str ESTAB 0 0 @/tmp/.X11-unix/X1 26800 * 25948 users:(("Xorg",pid=1519,fd=51))

u_str ESTAB 0 0 @/tmp/.X11-unix/X1 331064 * 332076 users:(("Xorg",pid=1519,fd=40))

u_str ESTAB 0 0 @/tmp/.X11-unix/X1 155940 * 149392 users:(("Xorg",pid=1519,fd=46))

...

u_str ESTAB 0 0 @/tmp/.X11-unix/X1 16326 * 20803 users:(("Xorg",pid=1519,fd=44))

u_str ESTAB 0 0 @/tmp/.X11-unix/X1 11106 * 27720 users:(("Xorg",pid=1519,fd=50))

u_str LISTEN 0 4096 @/tmp/.X11-unix/X1 12782 * 0 users:(("Xorg",pid=1519,fd=7))

It's just a long table listing same "Xorg" process on every line,

which obviously isn't what example claims to fetch, or useful in any way.

So maybe it worked fine earlier, but some changes to the tool or whatever

data it grabs made this example obsolete and not work anymore.

But there are "ports" listed for unix sockets, which I think correspond to

"inodes" in /proc/net/unix, and are global across host (or at least same netns),

so two sides of connection - that socket-path + Xorg process info - and other

end with connected process info - can be joined together by those port/inode numbers.
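That join can be sketched roughly like this in Python, working on captured `ss -xp` text. It is not the unix-socket-links script itself: the `socket_links` name and the naive whitespace field-splitting are assumptions, and the second line in `SAMPLE` (the client end) is made up for illustration, following how ss prints unnamed ends with `*` for an address.

```python
import re
from collections import defaultdict

SAMPLE = '''Netid State Recv-Q Send-Q Local Address:Port Peer Address:Port Process
u_str ESTAB 0 0 @/tmp/.X11-unix/X1 26800 * 25948 users:(("Xorg",pid=1519,fd=51))
u_str ESTAB 0 0 * 25948 * 26800 users:(("xterm",pid=1843,fd=3))
u_str LISTEN 0 4096 @/tmp/.X11-unix/X1 12782 * 0 users:(("Xorg",pid=1519,fd=7))
'''

def socket_links(ss_output):
    """Map socket path -> server/client (name, pid) sets, joined by inode."""
    entries = []
    for line in ss_output.splitlines():
        fields = line.split()
        if len(fields) < 8 or not fields[0].startswith("u_"):
            continue  # header or some unrelated line
        procs = set(re.findall(r'\("([^"]+)",pid=(\d+)', line))
        # state, local path, local inode ("port"), peer inode, processes
        entries.append((fields[1], fields[4], fields[5], fields[7], procs))
    by_inode = {inode: procs for _, _, inode, _, procs in entries}
    links = defaultdict(lambda: {"server": set(), "clients": set()})
    for state, path, inode, peer, procs in entries:
        if path == "*":
            continue  # unnamed end, only reachable through its peer's inode
        links[path]["server"] |= procs
        if state != "LISTEN":
            links[path]["clients"] |= by_inode.get(peer, set())
    return dict(links)
```

Process names containing spaces would break the field-splitting here, so anything more serious should parse the columns more carefully.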

I haven't been able to find a tool to do that for me easily atm, so went ahead to

write my own script, mostly focused on listing per-socket pids on either end, e.g.:

# unix-socket-links

...

/run/dbus/system_bus_socket :: dbus-broker[1190] :: Xorg[1519] bluetoothd[1193]

claws-mail[2203] dbus-broker-lau[1183] efreetd[1542] emacs[2160] enlightenment[1520]

pulseaudio[1523] systemd-logind[1201] systemd-network[1363] systemd-timesyn[966]

systemd[1366] systemd[1405] systemd[1] waterfox[2173]

...

/run/user/1000/bus :: dbus-broker[1526] :: dbus-broker-lau[1518] emacs[2160] enlightenment[1520]

notification-th[1530] pulseaudio[1523] python3[1531] python3[5397] systemd[1405] waterfox[2173]

/run/user/1000/pulse/native :: pulseaudio[1523] :: claws-mail[2203] emacs[2160]

enlightenment[1520] mpv[9115] notification-th[1530] python3[2063] waterfox[2173]

@/tmp/.X11-unix/X1 :: Xorg[1519] :: claws-mail[2203] conky[1666] conky[1671] emacs[2160]

enlightenment[1520] notification-th[1530] python3[5397] redshift[1669] waterfox[2173]

xdpms[7800] xterm[1843] xterm[2049] yeahconsole[2047]

...

Output format is <socket-path> :: <listening-pid> :: <clients...>, where it's

trivial to see exactly what is connected to which socket (and what's listening there).

unix-socket-links @/tmp/.X11-unix/X1 lists only conns/pids for that
socket, and adding -c/--conns disaggregates that list of
processes back into specific connections (which can be shared between pids too),

to get more like a regular netstat/ss output, but with procs on both ends,

not weirdly broken one like ss -xp gives you.

Script is in the usual mk-fg/fgtk repo (also on codeberg and local git),

with code link and a small doc here:

https://github.com/mk-fg/fgtk?tab=readme-ov-file#hdr-unix-socket-links

Was half-suspecting that I might need to parse /proc/net/unix or load eBPF

for this, but nope, ss has all the info needed, just presents it in a silly way.

Also, unlike some other iproute2 tools where that was added (or lsfd below), it

doesn't have --json output flag, but should be stable enough to parse

anyway, I think, and easy enough to sanity-check by the header.

Oh, and also, one might be tempted to use lsof or lsfd for this, like I did,
but it's more complicated and can be janky to get the right output out of these.
Pretty sure lsof even has side-effects, where it connects to the socket with +E
(good luck figuring out what that's supposed to do btw), causing all sorts of
unintended mayhem. Still, here are snippets that I've used for those in the past
(checking where stalled ssh-agent socket connections are from in this example):

lsof -wt +E "$SSH_AUTH_SOCK" | awk '{print "\\<" $1 "\\>"}' | g -3f- <(ps axlf)

lsfd -no PID -Q "UNIX.PATH == '$SSH_AUTH_SOCK'" | grep -f- <(ps axlf)

Don't think either of those work anymore, maybe for same reason as with ss

not listing unix socket path for egress unix connections, and lsof in particular

straight-up hangs without even kill -9 getting it, if socket on the other

end doesn't process its (silly and pointless) connection, so maybe don't use

that one at least - lsfd seems to be easier to use in general.

Jul 01, 2024

Usually zsh does fine wrt tab-completion, but sometimes you just get nothing

when pressing tab, either due to somewhat-broken completer or it working as

intended but there seemingly being "nothing" to complete.

Recently the latter started happening after redirection characters,

e.g. on cat myfile > <TAB>, and that finally prompted me to re-examine

why I even put up with this crap.

Because in vast majority of cases, completion should use files, except for

commands as the first thing on the line, and maybe some other stuff way more rarely,

almost as an exception.

But completing nothing at all seems like an obvious bug to me,

as if I wanted nothing, I wouldn't have pressed the damn tab key in the first place.

One common way to work around the lack of file-completions when needed,

is to define special key for just those, like shift-tab:

zstyle ':completion:complete-files:*' completer _files

bindkey "\e[Z" complete-files

If using that becomes a habit every time one needs files, that'd be a good solution,
but I still use generic "tab" by default, and expect file-completion from it in most cases,
so why not have it fall back to file-completion if whatever special thing zsh has
otherwise fails - i.e. suggest files/paths instead of nothing.

Looking at _complete_debug output (can be bound/used instead of tab-completion),

it's easy to find where _main_complete dispatcher picks completer script,

and that there is apparently no way to define fallback of any kind there, but easy

enough to patch one in, at least.

Here's the hack I ended up with for /etc/zsh/zshrc:

## Make completion always fall back to next completer if current returns 0 results
# This allows falling back to _files completion properly when fancy _complete fails

# Patch requires running zsh as root at least once, to apply it (or warn/err about it)

_patch_completion_fallbacks() {

local patch= p=/usr/share/zsh/functions/Completion/Base/_main_complete

[[ "$p".orig -nt "$p" ]] && return || {

grep -Fq '## fallback-complete patch v1 ##' "$p" && touch "$p".orig && return ||:; }

[[ -n "$(whence -p patch)" ]] || {

echo >&2 'zshrc :: NOTE: missing "patch" tool to update completions-script'; return; }

read -r -d '' patch <<'EOF'

--- _main_complete 2024-06-09 01:10:28.352215256 +0500

+++ _main_complete.new 2024-06-09 01:10:51.087404762 +0500

@@ -210,18 +210,20 @@

fi

_comp_mesg=

if [[ -n "$call" ]]; then

if "${(@)argv[3,-1]}"; then

ret=0

break 2

fi

elif "$tmp"; then

+ ## fallback-complete patch v1 ##

+ [[ $compstate[nmatches] -gt 0 ]] || continue

ret=0

break 2

fi

(( _matcher_num++ ))

done

[[ -n "$_comp_mesg" ]] && break

(( _completer_num++ ))

done

EOF

patch --dry-run -stN "$p" <<< "$patch" &>/dev/null \

|| { echo >&2 "zshrc :: WARNING: zsh fallback-completions patch fails to apply"; return; }

cp -a "$p" "$p".orig && patch -stN "$p" <<< "$patch" && touch "$p".orig \

|| { echo >&2 "zshrc :: ERROR: failed to apply zsh fallback-completions patch"; return; }

echo >&2 'zshrc :: NOTE: patched zsh _main_complete routine to allow fallback-completions'

}

[[ "$UID" -ne 0 ]] || _patch_completion_fallbacks

unset _patch_completion_fallbacks

This would work with multiple completers defined like this:

zstyle ':completion:*' completer _complete _ignored _files

Where _complete _ignored is the default completer-chain, and will try

whatever zsh has for the command first, and then if those return nothing,

instead of being satisfied with that, patched-in continue will keep going

and run next completer, which is _files in this case.
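The control-flow change is small enough to model in a few lines of Python. This is only a toy model of the dispatcher loop, not zsh code: each completer here returns an `(ok, matches)` pair, loosely standing in for a zsh completer's exit status and the resulting `compstate[nmatches]`, and the `fancy`/`files` functions are hypothetical stand-ins for `_complete` and `_files`.

```python
def run_completers(completers, word, fallback_on_empty=True):
    """Return matches from the first completer that is accepted by the loop."""
    for completer in completers:
        ok, matches = completer(word)
        if not ok:
            continue  # completer failed outright, next one is tried (stock behavior)
        if fallback_on_empty and not matches:
            continue  # the patched-in check: "success" with 0 matches is not enough
        return matches
    return []

def fancy(word):
    """Stand-in for a command-specific completer that succeeds with nothing."""
    return True, []

def files(word):
    """Stand-in for file completion over a fixed list of names."""
    names = ("notes.txt", "nothing.md", "todo")
    return True, [name for name in names if name.startswith(word)]
```

With `fallback_on_empty=False` the loop stops at `fancy` and returns nothing, which is the behavior described above, while the default keeps going until `files` produces something.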

The patch carries generous context, so that it either finds the right place or bails
if upstream code changes, but otherwise it fixes the issue whenever the shell is first
run as root, until the next zsh package update (after which the patch will run and fix it again).

Doubt it'd make sense upstream in this form, as presumably current behavior is

locked-in over years, but an option for something like this would've been nice.

I'm content with a hack for now though, it works too.

Jan 17, 2024

Some days ago, I randomly noticed that github stopped rendering long

rst (as in reStructuredText) README files on its repository pages.

Happened in a couple repos, with no warning or anything, it just said "document

takes too long to preview" below the list of files, with a link to view raw .rst file.

Sadly that's not the only issue with rst rendering, as codeberg (and pretty

sure its Gitea / Forgejo base apps) had issues with some syntax there as well -

didn't make any repo links correctly, didn't render table of contents, missed

indented references for links, etc.

So thought to fix all that by converting these few long .rst READMEs to .md (markdown),

which does indeed fix all issues above, as it's a much more popular format nowadays,

and apparently well-tested and working fine in at least those git-forges.

One nice thing about rst however, is that it has one specification and a

reference implementation of tools to parse/generate its syntax -

python docutils - which can be used to go over .rst file in a strict

manner and point out all syntax errors in it (rst2html does it nicely).

Good example of such errors that always gets me is using links in the text with
reference-style URLs for those below (instead of inlining them), to avoid
making plaintext really ugly, unreadable and hard to edit due to giant
mostly-useless URLs in the middle of it.

You have to remember to put all those references in, ideally not leave any

unused ones around, and then keep them matched to tags in the text precisely,

down to every single letter, which of course doesn't really work with typing

stuff out by hand without some kind of machine-checks.

And then also, for git repo documentation specifically, all these links should

point to files in the repo properly, and those get renamed, moved and removed

often enough to be a constant problem as well.

Proper static-HTML doc-site generation tools like mkdocs (or its
popular mkdocs-material theme) do some checking for issues like that

(though confusingly not nearly enough), but require a bit of setup,

with configuration and whole venv for them, which doesn't seem very

practical for a quick README.md syntax check in every random repo.

MD linters apparently go the other way and check various garbage metrics like

whether plaintext conforms to some style, while also (confusingly!) often not

checking basic crap like whether it actually works as a markdown format.

Task itself seems ridiculously trivial - find all ... [some link] ... and

[some link]: ... bits in the file and report any mismatches between the two.

But looking at md linters a few times now, couldn't find any that do it nicely

that I can use, so ended up writing my own one - markdown-checks tool - to

detect all of the above problems with links in .md files, and some related quirks:

- link-refs - Non-inline links like "[mylink]" have exactly one "[mylink]: URL" line for each.

- link-refs-unneeded - Inline URLs like "[mylink](URL)" when "[mylink]: URL" is also in the md.

- link-anchor - Not all headers have "<a name=hdr-...>" line. See also -a/--add-anchors option.

- link-anchor-match - Mismatch between header-anchors and hashtag-links pointing to them.

- link-files - Relative links point to an existing file (relative to them).

- link-files-weird - Relative links that start with non-letter/digit/hashmark.

- link-files-git - If .md file is in a git repo, warn if linked files are not under git control.

- link-dups - Multiple same-title links with URLs.

- ... - and probably a couple more by now

- rx-in-code - Command-line-specified regexp (if any) detected inside code block(s).

- tabs - Make sure md file contains no tab characters.

- syntax - Any kind of incorrect syntax, e.g. blocks opened and not closed and such.
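The basic link-refs comparison from the list above can be sketched with a couple of regexps in Python. This is a simplified illustration and not the markdown-checks code: the `check_link_refs` name is made up, and it ignores code blocks, label case-folding and things like `[x]` checkboxes, which a real checker has to handle.

```python
import re

def check_link_refs(md_text):
    """Return (used-but-undefined, defined-but-unused) reference-link names."""
    # "[name]: URL" definition lines
    defs = set(re.findall(r"^[ ]{0,3}\[([^\]]+)\]:\s*\S+", md_text, flags=re.M))
    # Drop definition lines, so that they don't count as uses
    body = re.sub(r"^[ ]{0,3}\[[^\]]+\]:.*$", "", md_text, flags=re.M)
    # "[name]" that is not followed by "(" (inline URL), ":" or "[" (link text)
    used = set(re.findall(r"\[([^\]]+)\](?![(:\[])", body))
    return sorted(used - defs), sorted(defs - used)
```

Both returned lists being empty means every reference-style link has a definition and no definition is left unused.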

ReST also has a nice .. contents:: feature that automatically renders Table of Contents

from all document headers, quite like mkdocs does for its sidebars, but afaik basic

markdown does not have that, and maintaining that thing with all-working links manually,

without any kind of validation, is pretty much impossible in particular,

and yet absolutely required for large enough documents with a non-autogenerated ToC.

So one interesting extra thing that I found needing to implement there was for script

to automatically (with -a/--add-anchors option) insert/update

<a name=hdr-some-section-header></a> anchor-tags before every header,

because otherwise internal links within document are impossible to maintain either -

github makes hashtag-links from headers according to its own inscrutable logic,

gitlab/codeberg do their own thing, and there's no standard for any of that

(which is a historical problem with .md in general - poor ad-hoc standards on

various features, while .rst has internal links in its spec).

Thus making/maintaining table-of-contents kinda requires stable internal links and

validating that they're all still there, and ideally that all headers have such

internal link as well, i.e. new stuff isn't missing in the ToC section at the top.

Script addresses both parts by adding/updating those anchor-tags, and having

them in the .md file itself indeed makes all internal hashtag-links "stable"

and renderer-independent - you point to a name= set within the file, not guess

at what name github or whatever platform generates in its html at the moment

(which inevitably won't match, so kinda useless that way too).

And those are easily validated as well, since both anchor and link pointing to

it are in the file, so any mismatches are detected and reported.
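Since both sides are plain text in the same file, that validation can be as simple as comparing two sets. A rough Python sketch, assuming anchors are always written as `<a name=hdr-...>` and internal links as `(#hdr-...)` or `[ref]: #hdr-...` (the `check_anchors` name is hypothetical, and this is not how markdown-checks necessarily does it):

```python
import re

def check_anchors(md_text):
    """Return (links-without-anchor, anchors-without-link) name lists."""
    anchors = set(re.findall(r"<a name=([\w-]+)>", md_text))
    # Inline "](#name)" links and reference-style "]: #name" definitions
    links = set(re.findall(r"(?:\]\(|\]:[ \t]*)#([\w-]+)", md_text))
    return sorted(links - anchors), sorted(anchors - links)
```

The first list has broken internal links, and the second one can be used to spot headers that are missing from a manually maintained ToC.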

I was also thinking about generating the table-of-contents section itself,

same as it's done in rst, for which surely many tools exist already,

but as long as it stays correct and checked for not missing anything,

there's not much reason to bother - editing it manually allows for much greater

flexibility, and it's not long enough for that to be any significant amount

of work, either to make initially or add/remove a link there occasionally.

With all these checks for wobbly syntax bits in place, markdown READMEs

seem to be as tidy, strict and manageable as rst ones. Both formats have rough

feature parity for such simple purposes, but .md is definitely the only one with

good-enough support on public code-forge sites, so a better option for public docs atm.

Jan 09, 2024

Earlier, as I was setting-up filtering for ca-certificates on a host running

a bunch of systemd-nspawn containers (similar to LXC), simplest way to handle

configuration across all those consistently seemed to be just rsyncing filtered

p11-kit bundle into them, and running (distro-specific) update-ca-trust there,

to easily have same expected CA roots across them all.

But since these are mutable full-rootfs multi-app containers with init (systemd)

in them, they update their filesystems separately, and routine package updates

will overwrite cert bundles in /usr/share/, so they'd have to be rsynced again

after that happens.

Good mechanism to handle this in linux is fanotify API, which in practice is

used something like this:

# fatrace -tf 'WD+<>'

15:58:09.076427 rsyslogd(1228): W /var/log/debug.log

15:58:10.574325 emacs(2318): W /home/user/blog/content/2024-01-09.abusing-fanotify.rst

15:58:10.574391 emacs(2318): W /home/user/blog/content/2024-01-09.abusing-fanotify.rst

15:58:10.575100 emacs(2318): CW /home/user/blog/content/2024-01-09.abusing-fanotify.rst

15:58:10.576851 git(202726): W /var/cache/atop.d/atop.acct

15:58:10.893904 rsyslogd(1228): W /var/log/syslog/debug.log

15:58:26.139099 waterfox(85689): W /home/user/.waterfox/general/places.sqlite-wal

15:58:26.139347 waterfox(85689): W /home/user/.waterfox/general/places.sqlite-wal

...

Where fatrace in this case is used to report all write, delete, create and

rename-in/out events for files and directories (that weird "-f WD+<>" mask),

as it promptly does.

It's useful to see what apps might abuse SSD/NVMe writes, or more generally

to understand what's going on with filesystem under some load, which app

is to blame for that and where it happens, or as a debugging/monitoring tool.

But also if you want to rsync/update files after they get changed under some

dirs recursively, it's an awesome tool for that as well.

With container updates above, can monitor /var/lib/machines fs, and it'll report

when anything in <some-container>/usr/share/ca-certificates/trust-source/ gets

changed under it, which is when aforementioned rsync hook should run again for

that container/path.

To have something more robust and simpler than a hacky bash script around

fatrace, I've made run_cmd_pipe.nim tool, that reads ini config file like this,

with a list of input lines to match:

delay = 1_000 # 1s delay for any changes to settle

cooldown = 5_000 # min 5s interval between running same rule+run-group command

[ca-certs-sync]

regexp = : \S*[WD+<>]\S* +/var/lib/machines/(\w+)/usr/share/ca-certificates/trust-source(/.*)?$

regexp-env-group = 1

regexp-run-group = 1

run = ./_scripts/ca-certs-sync

And runs commands depending on regexp (PCRE) matches on whatever input gets
piped into it, passing regexp-match groups to them via env, with sane debouncing delays,
deduplication, config reloads, tiny mem footprint and other proper-daemon stuff.

Can also setup its pipe without shell, for an easy ExecStart=run_cmd_pipe rcp.conf

-- fatrace -cf WD+<> systemd.service configuration.
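The matching and rate-limiting parts of that idea fit into a short Python sketch. It only illustrates the approach and is not a port of run_cmd_pipe.nim: the regexp is the one from the config above, while the `match_events` and `Cooldown` names, the explicit `now` timestamps and the pacman line in `SAMPLE` are assumptions made up for the example.

```python
import re

# Same regexp as in the [ca-certs-sync] config section
RX = re.compile(
    r": \S*[WD+<>]\S* +/var/lib/machines/(\w+)"
    r"/usr/share/ca-certificates/trust-source(/.*)?$")

SAMPLE = [
    "15:58:09.076427 rsyslogd(1228): W /var/log/debug.log",
    "16:01:02.000001 pacman(4242): CW /var/lib/machines/web"
    "/usr/share/ca-certificates/trust-source/mozilla.trust.p11-kit",
]

def match_events(lines, rx=RX):
    """Yield regexp group 1 (container name) for each matching input line."""
    for line in lines:
        match = rx.search(line.rstrip("\n"))
        if match:
            yield match.group(1)

class Cooldown:
    """Allow an action for each key at most once per `interval` seconds."""

    def __init__(self, interval):
        self.interval, self.last = interval, {}

    def ready(self, key, now):
        if key in self.last and now - self.last[key] < self.interval:
            return False  # same rule/group ran too recently
        self.last[key] = now
        return True
```

A real daemon would read lines from the fatrace pipe, wait out the settle delay, and run the configured command with the matched group in its environment whenever `ready()` allows it.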

Having this running for a bit now, and bumping into other container-related

tasks, realized how it's useful for a lot of things even more generally,

especially when multiple containers need to send some changes to host.

For example, if a bunch of containers should have custom network interfaces
bridged between them (in a root netns), which e.g. systemd.nspawn Zone=
doesn't adequately handle - just add whatever custom
VirtualEthernetExtra=vx-br-containerA:vx-br into container, have the script
that sets up those interfaces inside it "touch" or create a file when it's done,
and then run host-script for that event, to handle bridging on the other side:

[vx-bridges]

regexp = : \S*W\S* +/var/lib/machines/(\w+)/var/tmp/vx\.\S+\.ready$

regexp-env-group = 1

run = ./_scripts/vx-bridges

This seems to be incredibly simple (touch/create files to pick-up as events),

very robust (as filesystems tend to be), and doesn't need to run anything more

than ~600K of fatrace + run_cmd_pipe, with a very no-brainer configuration

(which file[s] to handle by which script[s]).

Can be streamlined for any types and paths of containers themselves

(incl. LXC and OCI app-containers like docker/podman) by bind-mounting

dedicated filesystem/volume into those to pass such event-files around there,

kinda like it's done in systemd with its agent plug-ins, e.g. for handling

password inputs, so not really a novel idea either.

systemd.path units can also handle simpler non-recursive "this one file changed" events.

Alternative with such shared filesystem can be to use any other IPC mechanisms,

like append/tail file, fcntl locks, fifos or unix sockets, and tbf run_cmd_pipe.nim

can handle all those too, by running e.g. tail -F shared.log instead of fatrace,

but latter is way more convenient on the host side, and can act on incidental or

out-of-control events (like pkg-mangler doing its thing in the initial ca-certs use-case).

Won't work for containers distributed beyond single machine or more self-contained VMs -

that's where you'd probably want more complicated stuff like AMQP, MQTT, K8s and such -

but for managing one host's own service containers, regardless of whatever they run and

how they're configured, this seems to be a really neat way to do it.

Dec 28, 2023

It's no secret that Web PKI was always a terrible mess.

Idk of anything that can explain it better than Moxie Marlinspike's old
"SSL And The Future Of Authenticity" talk, which still pretty much holds up

(and is kinda hilarious), as Web PKI for TLS is still up to >150 certs,

couple of which get kicked-out after malicious misuse or gross malpractice

every now and then, and it's actually more worrying when they don't.

And as of 2023, EU eIDAS proposal stands to make this PKI much worse in the

near-future, adding whole bunch of random national authorities to everyone's

list of trusted CAs, which of course have no rational business of being there

on all levels.

(with all people/orgs on the internet seemingly in agreement on that - see e.g.

EFF, Mozilla, Ryan Hurst's excellent writeup, etc - but it'll probably pass

anyway, for whatever political reasons)

So in the spirit of at least putting some bandaid on that, I had a long-standing

idea to write a logger for all CAs that my browser uses over time, then inspect

it after a while and kick the rarely-used (<1%) CAs out of the browser at least.

This is totally doable, and not that hard - e.g. cerdicator extension can be

tweaked to log to a file instead of displaying CA info - but never got around to

doing it myself.

Update 2024-01-03: there is now also CertInfo app to scrape local history and

probe all sites there for certs, building a list of root and intermediate CAs to inspect.

But recently, scrolling through Ryan Hurst's "eIDAS 2.0 Provisional Agreement

Implications for Web Browsers and Digital Certificate Trust" open letter,

pie chart on page-3 there jumped out to me, as it showed that 99% of certs use

only 6-7 CAs - so why even bother logging those, there's a simple list of them,

which should mostly work for me too.

I remember browsers and different apps using their own CA lists being a problem

in the past, having to tweak mozilla nss database via its own tools, etc,

but by now, as it turns out, this problem seems to have been long-solved on a

typical linux, via distro-specific "ca-certificates" package/scripts and p11-kit

(or at least it appears to be solved like that on my systems).

Gist is that /usr/share/ca-certificates/trust-source/ and its /etc

counterpart have *.p11-kit CA bundles installed there by some package like

ca-certificates-mozilla, and then package-manager runs update-ca-trust,

which exports that to /etc/ssl/cert.pem and such places, where all other

tools can pick up and use same CAs.

Firefox (or at least my Waterfox build) even uses installed p11-kit bundle(s)

directly and immediately.

So only those p11-kit bundles need to be altered or restricted somehow to affect
everything on the system, needing update-ca-trust at most - neat!

One problem I bumped into however, is that p11-kit tools only support masking

specific individual CAs from the bundle via blacklist, and that will not be

future-proof wrt upstream changes to that bundle, if the goal is to "only use

these couple CAs and nothing else".

So ended up writing a simple script to go through .p11-kit bundle files and remove

everything unnecessary from them on a whitelist basis - ca-certificates-whitelist-filter -

which uses a simple one-per-line format with wildcards to match multiple certs:

Baltimore CyberTrust Root # CloudFlare

ISRG Root X* # Let's Encrypt

GlobalSign * # Google

DigiCert *

Sectigo *

Go Daddy *

Microsoft *

USERTrust *
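Matching against such a list is easy to sketch with shell-style wildcards from Python's `fnmatch` module. This only shows the label-matching part under assumed names (`parse_whitelist`, `is_whitelisted`), taking cert labels as already extracted strings - it does not parse .p11-kit bundles and is not the ca-certificates-whitelist-filter code.

```python
from fnmatch import fnmatchcase

def parse_whitelist(text):
    """Return wildcard patterns, one per line, with '#' comments stripped."""
    patterns = []
    for line in text.splitlines():
        pattern = line.split("#", 1)[0].strip()
        if pattern:
            patterns.append(pattern)
    return patterns

def is_whitelisted(label, patterns):
    """Check a certificate label against case-sensitive wildcard patterns."""
    return any(fnmatchcase(label, pattern) for pattern in patterns)
```

Anything in a bundle whose label fails `is_whitelisted()` would then be dropped, so new CAs added upstream later stay excluded by default.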

Picking whitelisted CAs from Ryan's list, found that GlobalSign should be added,

and that it already signs Google's GTS CA's (so latter are unnecessary), while
"Baltimore CyberTrust Root" seems to be a strange omission, as it signs CloudFlare's

CA cert, which should've been a major thing on the pie chart in that eIDAS open letter.

But otherwise, that's pretty much it, leaving a couple of top-level CAs instead

of a hundred, and couple days into it so far, everything seems to be working fine

with just those.

Occasional "missing root" error can be resolved easily by adding that root to the list,

or ignoring it for whatever irrelevant one-off pages, though this really doesn't seem

to be an issue at all.

This is definitely not a solution to Web PKI being a big pile of dung, made as

an afterthought and then abused relentlessly and intentionally, with no apparent

incentive or hope for fixes, but I think a good low-effort bandaid against clumsy

mass-MitM by whatever random crooks on the network, in ISPs and idiot governments.

It still unfortunately leaves out two large issues in particular:

CAs on the list are still terribly mismanaged orgs.

For example, Sectigo there is a renamed Comodo CA, after a series of incredible

fuckups in all aspects of their "business", and I'm sure the rest of them are

just as bad, but at least it's not a 100+ of those to multiply the risks.

Majority of signing CAs are so-called "intermediate" CAs (600+ vs 100+ roots),

which have valid signing cert itself signed by one of the roots, and these are even

more shady, operating with even less responsibility/transparency and no oversight.

Hopefully this is a smaller list with fewer roots as well, though ideally all
those should be whitelist-pruned exactly the same as roots, which I think is easiest
to do via cert chain/usage logs (from e.g. CertInfo app mentioned above),
where actual first signing cert in the chain can be seen, not just top-level ones.

But then such whitelist probably can't be enforced, as you'd need to say

"trust CAs on this list, but NOT any CAs that they signed",

which is not how most (all?) TLS implementations work ¯\_(ツ)_/¯

And a long-term problem with this approach, is that if used at any scale, it

further shifts control over CA trust from e.g. Mozilla's p11-kit bundle to those

dozen giant root CAs above, who will then realistically have to sign even more

and more powerful intermediate CAs for other orgs and groups (as they're the

only ones on the CA list), ossifying them to be in control of Web PKI in the

future over time, and makes "trusting" them a meaningless non-decision (as you

can't avoid that, even as/if/when they have to sign sub-CAs for whatever shady

bad actors in secret).

To be fair, there are proposals and movements to remedy this situation, like

Certificate Transparency and various cert and TLS policies/parameters' pinning,

but I'm not hugely optimistic, and just hope that a quick fix like this might be

enough to be on the right side of "you don't need to outrun the bear, just the

other guy" metaphor.

Link: ca-certificates-whitelist-filter script on github (codeberg, local git)

Nov 17, 2023

Like probably most folks who are surrounded by tech, I have too many USB

devices plugged into the usual desktop, to the point that it kinda bothers me.

For one thing, some of those doohickeys always draw current and noticeably

heat up in the process, which can't be good on either side of the port.

Good examples of this are WiFi dongles (with iface left in UP state), a

cheap NFC reader I have (draws 300mA idling on the table 99.99% of the time),

or anything with "battery" or "charging" in the description.

Other issue is that I don't want some devices to always be connected.

Dual-booting into gaming Windows for instance, there's nothing good that

comes from it poking at and spinning-up USB-HDDs, Yubikeys or various

connectivity dongles' firmware, as well as jerking power on-and-off on those

for reboots and whenever random apps/games probe those (yeah, not sure why either).

Unplugging stuff by hand is work, and leads to replacing usb cables/ports/devices

eventually (more work), so toggling power on/off at USB hubs seems like an easy fix.

USB Hubs sometimes support that in one of two ways - either physical switches

next to ports, or using USB Per-Port-Power-Switching (PPPS) protocol.

Problem with physical switches is that relying on yourself not to forget to do

some on/off sequence manually for devices each time doesn't work well,

and kinda silly when it can be automated - i.e. if you want to run ad-hoc AP,

let the script running hostapd turn the power on-and-off around it as well.

But sadly, at least in my experience with it, USB Hub PPPS is also a bad solution,

broken by two major issues, which are likely unfixable:

USB Hubs supporting per-port power toggling are impossible to find or identify.

Vendors don't seem to care about and don't advertise this feature anywhere,

its presence/support changes between hardware revisions (probably as a

consequence of "don't care"), and is often half-implemented and dodgy.

uhubctl project has a list of Compatible USB hubs for example, and note

how hubs there have remarks like "DUB-H7 rev D,E (black). Rev B,C,F,G not

supported" - shops and even product boxes mostly don't specify these revisions

anywhere, or even list the wrong one.

So good luck finding the right revision of one model even when you know it

works, within a brief window while it's still in stock.

And knowing which one works is pretty much only possible through testing -

same list above is full of old devices that are not on the market, and that

market seems to be too large and dynamic to track models/revisions accurately.

On top of that, sometimes hubs toggle data lines and not power (VBUS),

making feature marginally less useful for cases above, but further confusing

the matter when reading specifications or even relying on reports from users.

Pretty sure that hubs with support for this are usually higher-end

vendors/models too, so it's expensive to buy a bunch of them to see what

works, and kinda silly to overpay for even one of them anyway.

PPPS in USB Hubs has no memory and defaults to ON state.

This is almost certainly by design - when someone plugs hub without obvious

buttons, they might not care about power switching on ports, and just want it

to work, so ports have to be turned-on by default.

But that's also the opposite of what I want for all cases mentioned above -

turning on all power-hungry devices on reboot (incl. USB-HDDs that can draw

like 1A on spin-up!), all at once, in the "I'm starting up" max-power mode, is

like the worst thing such hub can do!

I.e. you disable these ports for a reason, maybe a power-related reason, which

"per-port power switching" name might even hint at, and yet here you go,

on every reboot or driver/hw/cable hiccup, this use-case gets thrown out of the

window completely, in the dumbest and most destructive way possible.

It also negates the other use-cases for the feature of course - when you

simply don't want devices to be exposed, aside from power concerns - hub does

the opposite of that and gives them all up whenever it bloody wants to.

In summary - even if controlling hub port power via PPPS USB control requests

worked, and was easy to find (which it very much is not), it's pretty much

useless anyway.

My simple solution, which I can emphatically recommend:

Grab robust USB Hub with switches next to ports, e.g. 4-port USB3 ones,

which seem to be under $10 these days.

Get a couple of <$1 direct-current solid-state relays or mosfets, one per port.

I use locally-made К293КП12АП ones, rated for toggling 0-60V 2A DC via

1.1-1.5V optocoupler input, just sandwiched together at the end - they don't

heat up at all and are easy to solder wires to.

Some $3-5 microcontroller with the usual USB-TTY, like any Arduino or RP2040

(e.g. Waveshare RP2040-Zero from aliexpress).

Couple copper wires pulled from an ethernet cable for power, and M/F jumper

pin wires to easily plug into an MCU board headers.

An hour or few with a soldering iron, multimeter and a nice podcast.

Open up USB Hub - cheap one probably doesn't even have any screws - probe which

contacts switches connect in there, solder short thick-ish copper ethernet wires

from their legs to mosfets/relays, and jumper wires from input pins of the latter

to plug into a tiny rp2040/arduino control board on the other end.

I like SSRs instead of mosfets here to not worry about controller and hub

being plugged into same power supply that way, and they're cheap and foolproof -

pretty much can't connect them disastrously wrong, as they've diodes on both

circuits. Optocoupler LED in such relays needs one 360R resistor on shared GND

of control pins to drop 5V -> 1.3V input voltage there.
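As a quick sanity check of that resistor value, here is a minimal Ohm's-law sketch. It assumes exactly the numbers mentioned above (5V logic-high, ~1.3V drop on the optocoupler LED, 360R in series) and only one input being driven at a time. With a single resistor on shared GND, several simultaneously-enabled inputs would split that current between them, which this does not model. Function name is made up for illustration.

```python
def led_current_ma(supply_v: float, led_forward_v: float, resistor_ohm: float) -> float:
    """Current through a series resistor + LED in milliamps (plain Ohm's law)."""
    return (supply_v - led_forward_v) / resistor_ohm * 1000.0

# Values from the text above: 5V pin, ~1.3V on the optocoupler LED, 360R resistor
print(f"{led_current_ma(5.0, 1.3, 360.0):.1f} mA")  # prints "10.3 mA"
```

Whether that current is comfortable for a specific relay and MCU pin is something to check against their datasheets.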

This approach solves both issues above - components are easy to find,

dirt-common and dirt-cheap, and are wired into default-OFF state, to only be

toggled into ON via whatever code conditions you put into that controller.

Simplest way, with an RP2040 running the usual micropython firmware,

would be to upload a main.py file of literally this:

import sys, machine

pins = dict(

    (str(n), machine.Pin(n, machine.Pin.OUT, value=0))

    for n in range(4) )

while True:

    try: port, state = sys.stdin.readline().strip()

    except ValueError: continue # not a 2-character line

    if port_pin := pins.get(port):

        print(f'Setting port {port} state = {state}')

        if state == '0': port_pin.off()

        elif state == '1': port_pin.on()

        else: print('ERROR: Port state value must be "0" or "1"')

    else: print(f'ERROR: Port {port} is out of range')

And now sending trivial "<port><0-or-1>" lines to /dev/ttyACM0 will

toggle the corresponding pins 0-3 on the board to 0 (off) or 1 (on) state,

along with USB hub ports connected to those, while otherwise leaving ports

default-disabled.

From a linux machine, serial terminal is easy to talk to by running mpremote

used with micropython fw (note - "mpremote run ..." won't connect stdin to tty),

screen /dev/ttyACM0 or many other tools, incl. just "echo" from shell scripts:

stty -F /dev/ttyACM0 raw speed 115200 # only needed once for device

echo 01 >/dev/ttyACM0 # pin/port-0 enabled

echo 30 >/dev/ttyACM0 # pin/port-3 disabled

echo 21 >/dev/ttyACM0 # pin/port-2 enabled

...
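Same thing can be done from a Python script on the host side. This is a minimal sketch, assuming the main.py firmware above, the same /dev/ttyACM0 path, and that tty was already set to raw mode via stty as shown. The `port_command` and `set_port` names are made up for illustration.

```python
import os

def port_command(port: int, enabled: bool) -> bytes:
    """Build the "<port><0-or-1>" line that main.py above expects."""
    if port not in range(4):
        raise ValueError(f"port must be 0-3, got {port!r}")
    return f"{port}{int(enabled)}\n".encode("ascii")

def set_port(port: int, enabled: bool, tty: str = "/dev/ttyACM0") -> None:
    """Write one command line to the controller tty (path is an assumption)."""
    fd = os.open(tty, os.O_WRONLY | os.O_NOCTTY)
    try:
        os.write(fd, port_command(port, enabled))
    finally:
        os.close(fd)

print(port_command(2, True))  # b'21\n' - same bytes as "echo 21" sends
```

Wrapping some device-using code in `set_port(n, True)` and `set_port(n, False)` then gives the same power-on-only-while-used behavior as the shell version.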

I've started with finding a D-Link PPPS hub, quickly bumped into above

limitations, and have been using this kind of solution instead for about

a year now, migrating from old arduino uno to rp2040 mcu and hooking up

a second 4-port hub recently, as this kind of control over USB peripherals

from bash scripts that actually use those devices turns out to be very convenient.

So can highly recommend to not even bother with PPPS hubs from the start,

and wire your own solution with whatever simple logic for controlling these

ports that you need, instead of the silly braindead way in which USB PPPS works.

An example of a bit more complicated control firmware that I use, with watchdog

timeout/pings logic on a controller (to keep device up only while script using

it is alive) and some other tricks can be found in mk-fg/hwctl repository

(github/codeberg or a local mirror).
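The watchdog idea itself is simple enough to sketch in a few lines. To be clear, this is not the code from that repository, just a hypothetical illustration of the timeout/ping logic. The class and method names are made up, and it uses plain CPython `time.monotonic()`, while MicroPython firmware would more likely use `time.ticks_ms()` and `time.ticks_diff()` instead.

```python
import time

class PortWatchdog:
    """Keeps a port "on" only while pings keep arriving within the timeout."""

    def __init__(self, timeout: float):
        self.timeout = timeout
        self.deadline = None  # None means port is off

    def ping(self, now: float) -> None:
        # Each ping from the host script pushes the power-off deadline forward
        self.deadline = now + self.timeout

    def off(self) -> None:
        self.deadline = None

    def is_on(self, now: float) -> bool:
        return self.deadline is not None and now < self.deadline

wd = PortWatchdog(timeout=30.0)
t0 = time.monotonic()
wd.ping(t0)
print(wd.is_on(t0 + 10))  # True - last ping is recent enough
print(wd.is_on(t0 + 31))  # False - script stopped pinging, port goes off
```

Main loop on the controller would then set the pin to whatever `is_on()` returns, so a crashed or killed script cannot leave the device powered.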

Sep 05, 2023

Usually auto-generated names aim for being meaningful instead of distinct,

e.g. LEAFAL01A-P281, LEAFAN02A-P281, LEAFAL01B-P282, LEAFEL01A-P281,

LEAFEN01A-P281, etc, where single-letter diffs are common and decode to

something like different location or purpose.

Sometimes they aren't even that, and are assigned sequentially or by hash,

like in case of contents hashes, or interfaces/vlans/addresses in a network

infrastructure.

You always have to squint and spend time mentally decoding such identifiers,

as one letter/digit there can change whole meaning of the message, so working

with them is unnecessarily tiring, especially if a system often presents many of

those without any extra context.

Usual fix is naming things, i.e. assigning hostnames to separate hardware

platforms/VMs, DNS names to addresses, and such, but that doesn't work well

with modern devops approaches where components are typically generated with

"reasonable" but less readable naming schemes as described above.

Manually naming such stuff up-front doesn't work, and even assigning petnames

or descriptions by hand gets silly quickly (esp. with some churn in the system),

and it's not always possible to store/share that extra metadata properly

(e.g. on rebuilds in entirely different places).

Useful solution I found is hashing to automatically generated petnames,

which seem to be kinda overlooked and underused - i.e. to hash the name

to easily-distinct, readable and often memorable-enough strings:

- LEAFAL01A-P281 [ Energetic Amethyst Zebra ]

- LEAFAN02A-P281 [ Furry Linen Eagle ]

- LEAFAL01B-P282 [ Suave Mulberry Woodpecker ]

- LEAFEL01A-P281 [ Acidic Black Flamingo ]

- LEAFEN01A-P281 [ Prehistoric Raspberry Pike ]

Even just different length of these names makes them visually stand apart from

each other already, and usually you don't really need to memorize them in any way,

it's enough to be able to tell them apart at a glance in some output.

I've bumped into only one de-facto standard scheme for generating those -

"Angry Purple Tiger", with a long list of compatible implementations

(e.g. https://github.com/search?type=repositories&q=Angry+Purple+Tiger ):

% angry_purple_tiger LEAFEL01A-P281

acidic-black-flamingo

% angry_purple_tiger LEAFEN01A-P281

prehistoric-raspberry-pike

(default output is good for identifiers, but can use proper spaces and

capitalization to be more easily-readable, without changing the words)

It's not as high-entropy as "human hash" tools that use completely random words

or babble (see z-tokens for that), but imo wins by orders of magnitude in readability

and ease of memorization instead, and on the scale of names, it matters.

Since those names don't need to be stored anywhere, and can be generated

anytime, it is often easier to add them in some wrapper around tools and APIs,

without the need for the underlying system to know or care that they exist,

while making a world of difference in usability.
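Such wrapper can be tiny. Here is a minimal sketch of the hashing idea, which is NOT compatible with Angry Purple Tiger - the word lists are short made-up placeholders and the sha256-based word selection is my own assumption, so names will differ from the ones shown above. With only 8 words per list there are just 512 combinations, so a real tool needs much longer lists to keep collisions rare.

```python
import hashlib

# Tiny illustrative word lists - hypothetical, not any real tool's dictionaries
ADJECTIVES = ("acidic", "brave", "calm", "eager", "furry", "jolly", "quiet", "suave")
COLORS = ("amber", "black", "coral", "green", "linen", "olive", "pearl", "white")
ANIMALS = ("eagle", "ferret", "gecko", "heron", "otter", "pike", "raven", "zebra")

def petname(identifier: str, sep: str = "-") -> str:
    """Deterministically map an identifier to adjective-color-animal words."""
    digest = hashlib.sha256(identifier.encode()).digest()
    words = (
        ADJECTIVES[digest[0] % len(ADJECTIVES)],
        COLORS[digest[1] % len(COLORS)],
        ANIMALS[digest[2] % len(ANIMALS)],
    )
    return sep.join(words)

def annotate(identifier: str) -> str:
    """Append a readable petname to the original identifier for output."""
    return f"{identifier} [ {petname(identifier, ' ').title()} ]"

for name in ("LEAFAL01A-P281", "LEAFAN02A-P281", "LEAFAL01B-P282"):
    print(annotate(name))
```

Nothing is stored anywhere - same identifier always hashes to the same name, so any script printing these can add them on the fly.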

Honorable mention here to occasional tools like docker that have those already,

but imo it's more useful to remember about this trick for your own scripts

and wrappers, as that tends to be the place where you get to pick how to print

stuff, and can easily add an extra hash for that kind of accessibility.

Jan 26, 2023

As I kinda went on to replace a lot of silly long and insecure passwords with

FIDO2 USB devices - aka "yubikeys" - in various ways (e.g. earlier post about

password/secret management), support for my use-cases was mostly good:

Webauthn - works ok, and been working well for me with U2F/FIDO2 on various

important sites/services for quite a few years by now.

Wish it worked with NFC reader in Firefox on Linux Desktop too, but oh

well, maybe someday, if Mozilla doesn't implode before that.

Update 2024-02-21: fido2-hid-bridge seems to be an ok workaround for

this shortcoming, and other apps not using libfido2 with its pcscd support.

pam-u2f to login with the token using much simpler

and hw-rate-limited PIN (with pw fallback).

Module itself worked effortlessly, but had to be added to various pam services

properly, so that password fallback is available as well, e.g. system-local-login:

#%PAM-1.0

# system-login

auth required pam_shells.so

auth requisite pam_nologin.so

# system-auth + pam_u2f

auth required pam_faillock.so preauth

# auth_err=ignore will try same string as password for pam_unix

-auth [success=2 authinfo_unavail=ignore auth_err=ignore] pam_u2f.so \

    origin=pam://my.host.net authfile=/etc/secure/pam-fido2.auth \

    userpresence=1 pinverification=1 cue

auth [success=1 default=bad] pam_unix.so try_first_pass nullok

auth [default=die] pam_faillock.so authfail

auth optional pam_permit.so

auth required pam_env.so

auth required pam_faillock.so authsucc

# auth include system-login

account include system-login

password include system-login

session include system-login

"auth" section is an exact copy of system-login and system-auth lines from the

current Arch Linux, with pam_u2f.so line added in the middle, jumping over

pam_unix.so on success, or ignoring failure result to allow for entered string

to be tried as password there.

Using Enlightenment Desktop Environment here, also needed to make a trivial

"include system-local-login" file for its lock screen, which uses

"enlightenment" PAM service by default, falling back to basic system-auth or

something like that, instead of system-local-login.

sk-ssh-ed25519 keys work out of the box with OpenSSH.

Part that gets loaded in ssh-agent is much less sensitive than the usual

private-key - here it's just a cred-id blob that is useless without FIDO2 token,

and even that can be stored on-device with Discoverable/Resident Creds,

for some extra security or portability.

SSH connections can easily be cached using ControlMaster / ControlPath /

ControlPersist opts in the client config, so there's no need to repeat touch

presence-check too often.

One somewhat-annoying thing was with signing git commits - this can't be

cached like ssh connections, and doing physical ack on every git commit/amend

is too burdensome, but fix is easy too - add separate ssh key just for signing.

Such key would naturally be less secure, but not as important as an access key anyway.

Github supports adding "signing" ssh keys that don't allow access,

but Codeberg (and its underlying Gitea) currently does not - access keys

can be marked as "Verified", but can't be used for signing-only on the account,

which will probably be fixed, eventually, not a huge deal.

Early-boot LUKS / dm-crypt disk encryption unlock with offline key and a

simpler + properly rate-limited "pin", instead of a long and hard-to-type passphrase.

systemd-cryptenroll can work for that, if you have typical "Full Disk Encryption"

(FDE) setup, with one LUKS-encrypted SSD, but that's not the case for me.

I have more flexible LUKS-on-LVM setup instead, where some LVs are encrypted

and needed on boot, some aren't, some might have fscrypt, gocryptfs, some

other distro or separate post-boot unlock, etc etc.

systemd-cryptenroll does not support such use-case well, as it generates and

stores different credentials for each LUKS volume, and then prompts for

separate FIDO2 user verification/presence check for each of them, while I need

something like 5 unlocks on boot - no way I'm doing same thing 5 times, but

it is unavoidable with such implementation.

So had to make my own key-derivation fido2-hmac-boot tool for this,

described in more detail separately below.

Management of legacy passwords, passphrases, pins, other secrets and similar

sensitive strings of information - described in a lot more detail in an

earlier "FIDO2 hardware password/secret management" post.

This works great, required a (simple) extra binary, and integrating it into

emacs for my purposes, but also easy to setup in various other ways, and a lot

better than all alternatives (memory + reuse, plaintext somewhere, crappy

third-party services, paper, etc).

One notable problem with FIDO2 devices is that they don't really show what it

is you are confirming, so as a user, I can think that it wants to authorize

one thing, while whatever compromised code secretly requests something else

from the token.

But that's reasonably easy to mitigate by splitting usage by different

security level and rarity, then using multiple separate U2F/FIDO2 tokens for those,

given how tiny and affordable they are these days - I ended up having three of

them (so far!).

So using token with "ssh-git" label, you have a good idea what it'd authorize.

Aside from reasonably-minor quirks mentioned above, it all was pretty common

sense and straightforward for me, so can easily recommend migrating to workflows

built around cheap FIDO2 smartcards on modern linux as a basic InfoSec hygiene -

it doesn't add much inconvenience, and should be vastly superior to outdated

(but still common) practices/rituals involving passwords or keys-in-files.

Given how all modern PC hardware has TPM2 chips in motherboards, and these can

be used as a regular smartcard via PKCS#11 wrapper, they might also be a

somewhat nice malware/tamper-proof cryptographic backend for various use-cases above.

From my perspective, they seem to be strictly inferior to using portable FIDO2

devices however:

Soldered on the motherboard, so can't be easily used in multiple places.

Will live/die, and have to be replaced with the motherboard.

Non-removable and always-accessible, holding persistent keys in there.

Booting random OS with access to this thing seems to be a really bad idea,

as ideally such keys shouldn't even be physically connected most of the time,

especially to some random likely-untrustworthy software.

There is no physical access confirmation mechanism, so no way to actually

limit it - anything getting ahold of the PIN is really bad, as secret keys can

then be used freely, without any further visibility, rate-limiting or confirmation.

Motherboard vendor firmware security has a bad track record, and I'd rather

avoid trusting crappy code there with anything extra. In fact, part of the

point with having separate FIDO2 device is to trust local machine a bit less,

if possible, not more.

So given that grabbing FIDO2 device(s) is an easy option, don't think TPM2 is

even worth considering as an alternative to those, for all the reasons above,

and probably a bunch more that I'm forgetting at the moment.

Might be best to think of TPM2 as being in the domain of, and managed by, the OS vendor,

e.g. leave it to Windows 11 and Microsoft SSO system to do trusted/measured

boot and store whatever OS-managed secrets, being entirely uninteresting and

invisible to the end-user.

As also mentioned above, least well-supported FIDO2-backed thing for me was

early-boot dm-crypt / LUKS volume init - systemd-cryptenroll requires

unlocking each encrypted LUKS blkdev separately, re-entering PIN and re-doing

the touch thing multiple times in a row, with a somewhat-uncommon LUKS-on-LVM

setup like mine.

But of course that's easily fixable, having following steps with a typical

systemd init process:

Starting early on boot or in initramfs, Before=cryptsetup-pre.target, run

service to ask for FIDO2 token PIN via systemd-ask-password, then use that

with FIDO2 token and its hmac-secret extension to produce secure high-entropy

volume unlock key.

If PIN or FIDO2 interaction won't work, print error and repeat the query,

or exit if prompt is cancelled to fallback to default systemd passphrase

unlocking.

Drop that key into /run/cryptsetup-keys.d/ dir for each volume that it

needs to open, with whatever extra per-volume alterations/hashing.

Let systemd pass cryptsetup.target, where systemd-cryptsetup will

automatically lookup volume keys in that dir and use them to unlock devices.

If any keys don't work or are missing, systemd will do the usual passphrase-prompting

and caching, so there's always a well-supported first-class fallback unlock-path.

Run early-boot service to cleanup after cryptsetup.target,

Before=sysinit.target, to remove /run/cryptsetup-keys.d/ directory,

as everything should be unlocked by now and these keys are no longer needed.

I'm using common dracut initramfs generator with systemd here, where it's

easy to add a custom module that'd do all necessary early steps outlined above.

fido2_hmac_boot.nim implements all actual asking and FIDO2 operations, and can

be easily run from an initramfs systemd unit file like this (fhb.service):

[Unit]

DefaultDependencies=no

Wants=cryptsetup-pre.target

# Should be ordered same as stock systemd-pcrphase-initrd.service

Conflicts=shutdown.target initrd-switch-root.target

Before=sysinit.target cryptsetup-pre.target cryptsetup.target

Before=shutdown.target initrd-switch-root.target systemd-sysext.service

[Service]

Type=oneshot

RemainAfterExit=yes

StandardError=journal+console

UMask=0077

ExecStart=/sbin/fhb /run/initramfs/fhb.key

ExecStart=/bin/sh -c '\

    key=/run/initramfs/fhb.key; [ -e "$key" ] || exit 0; \

    mkdir -p /run/cryptsetup-keys.d; while read dev line; \

    do cat "$key" >/run/cryptsetup-keys.d/"$dev".key; \

    done < /etc/fhb.devices; rm -f "$key"'

With that fhb.service file and compiled binary itself installed via

module-setup.sh in the module dir:

#!/bin/bash

check() {

    require_binaries /root/fhb || return 1

    return 255 # only include if asked for

}

depends() {

    echo 'systemd crypt fido2'

    return 0

}

install() {

    # fhb.service starts binary before cryptsetup-pre.target to create key-file

    inst_binary /root/fhb /sbin/fhb

    inst_multiple mkdir cat rm

    inst_simple "$moddir"/fhb.service "$systemdsystemunitdir"/fhb.service

    $SYSTEMCTL -q --root "$initdir" add-wants initrd.target fhb.service

    # Some custom rules might be relevant for making consistent /dev symlinks

    while read p

    do grep -qiP '\b(u2f|fido2)\b' "$p" && inst_rules "$p"

    done < <(find /etc/udev/rules.d -maxdepth 1 -type f)

    # List of devices that fhb.service will create key for in cryptsetup-keys.d

    # Should be safe to have all "auto" crypttab devices there, just in case

    while read luks dev key opts; do

        [[ "${opts//,/ }" =~ (^| )noauto( |$) ]] && continue

        echo "$luks"

    done <"$dracutsysrootdir"/etc/crypttab >"$initdir"/etc/fhb.devices

    mark_hostonly /etc/fhb.devices

}

Module would need to be enabled via e.g. add_dracutmodules+=" fhb "

in dracut.conf.d, and will include the "fhb" binary, service file to run it,

list of devices to generate unlock-keys for in /etc/fhb.devices there,

and any udev rules mentioning u2f/fido2 from /etc/udev/rules.d, in case

these might be relevant for consistent device path or whatever other basic

device-related setup.

fido2_hmac_boot.nim "fhb" binary can be built (using C-like Nim compiler) with

all parameters needed for its operation hardcoded via e.g. -d:FHB_CID=...

compile-time options, to avoid needing to bother with any of those in systemd

unit file or when running it anytime on its own later.

It runs same operation as fido2-assert tool, producing HMAC secret for

specified Credential ID and Salt values.

Credential ID should be created/secured prior to that using related fido2-token

and fido2-cred binaries. All these tools come bundled with libfido2.
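Conceptually, and as far as I understand the CTAP2 hmac-secret extension, what the token does there boils down to a keyed hash of the salt. Sketch below is only a host-side model of that, under loud assumptions: `cred_random` is a stand-in for a per-credential secret that never leaves the real device, and all PIN/presence checks and encrypted salt/output transport of the actual protocol are left out. It is not how fhb or fido2-assert are implemented, those talk to the token via libfido2.

```python
import hashlib, hmac, os

def hmac_secret_model(cred_random: bytes, salt: bytes) -> bytes:
    """Model of hmac-secret output: HMAC-SHA256 keyed by a token-held secret."""
    if len(salt) != 32:
        raise ValueError("salt is expected to be exactly 32 bytes")
    return hmac.new(cred_random, salt, hashlib.sha256).digest()

# Stand-in for the secret that a real token keeps internally (assumption)
cred_random = os.urandom(32)
# Fixed salt, e.g. derived once from some label and then kept with the config
salt = hashlib.sha256(b"example-boot-salt").digest()

key = hmac_secret_model(cred_random, salt)
print(len(key))  # 32 bytes, same every time for the same token + salt
```

Point being that same Credential ID + Salt on the same token always gives the same 32-byte value, which is what makes it usable as a volume key.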

Since systemd doesn't nuke /run/cryptsetup-keys.d by default

(keyfile-erase option in crypttab can help, but has to be used consistently

for each volume), custom unit file to do that can be added/enabled to main

systemd as well:

[Unit]

DefaultDependencies=no

Conflicts=shutdown.target

After=cryptsetup.target

[Service]

Type=oneshot

ExecStart=rm -rf /run/cryptsetup-keys.d

[Install]

WantedBy=sysinit.target

And that should do it for implementing above early-boot unlocking sequence.

To enroll the key produced by "fhb" binary into LUKS headers, simply run it,

same as early-boot systemd would, and luksAddKey its output.

Couple additional notes on all this stuff:

HMAC key produced by "fhb" tool is a high-entropy uniformly-random 256-bit

(32B) value, so unlike passwords, does not actually need any kind of KDF

applied to it - it is the key, bruteforcing it should be about as infeasible

as bruteforcing 128/256-bit master symmetric cipher key (and likely even harder).

Afaik cryptsetup doesn't support disabling KDF for key-slot entirely,

but --pbkdf pbkdf2 --pbkdf-force-iterations 1000 options can be used to

set fastest parameters and get something close to disabling it.

cryptsetup config --key-slot N --priority prefer can be used to make

systemd-cryptsetup try unlocking volume with this no-KDF keyslot quickly first,

before trying other slots with memory/cpu-heavy argon2id and such proper PBKDF,

which should almost always be a good idea to do in this order, as it should

take almost no time to try 1K-rounds PBKDF2 slot.

Ideally each volume should have its own sub-key derived from one that fhb

outputs, e.g. via simple HMAC-SHA256(volume-uuid, key=fhb.key) operation,

which is omitted here for simplicity.

fhb binary includes --hmac option for that, to use instead of "cat" above:

fhb --hmac "$key" "$dev" /run/cryptsetup-keys.d/"$dev".key

This can be added so that any one of LUKS keys/keyslots being leaked or broken (for

some weird reason) won't have any effect on other keys - reversing such HMAC back

to fhb.key to use it for other volumes would still be cryptographically infeasible.
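That per-volume derivation is a one-liner in most languages. Here is a minimal sketch of it, assuming volume name/uuid string is used as-is for the HMAC message (exact encoding that fhb --hmac uses might differ) and with hypothetical function names. Demo writes to a temporary directory instead of /run/cryptsetup-keys.d.

```python
import hashlib, hmac, os, tempfile

def volume_key(master_key: bytes, volume_id: str) -> bytes:
    """Derive per-volume sub-key as HMAC-SHA256(volume_id, key=master_key)."""
    return hmac.new(master_key, volume_id.encode(), hashlib.sha256).digest()

def write_volume_keys(master_key: bytes, volumes: list, keys_dir: str) -> None:
    """Write <volume>.key files, like the shell loop in fhb.service does with cat."""
    os.makedirs(keys_dir, mode=0o700, exist_ok=True)
    for vol in volumes:
        path = os.path.join(keys_dir, f"{vol}.key")
        fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
        with os.fdopen(fd, "wb") as dst:
            dst.write(volume_key(master_key, vol))

with tempfile.TemporaryDirectory() as tmp:
    keys_dir = os.path.join(tmp, "cryptsetup-keys.d")
    # os.urandom here is a stand-in for the key that fhb gets from the token
    write_volume_keys(os.urandom(32), ["root", "home"], keys_dir)
    print(sorted(os.listdir(keys_dir)))
```

Each key enrolled into LUKS headers would then have to be the derived one for that volume, not the fhb output itself.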

Custom fido2_hmac_boot.nim binary/code used here is somewhat similar to an

earlier fido2-hmac-desalinate.c that I use for password management (see above),

but a bit more complex, so is written in an easier and much nicer/safer language

(Nim), while still being compiled through C to pretty much same result.

Jan 08, 2023

I've recently started using git notes as a good way to track metadata

associated with the code that's likely of no interest to anyone else,

and would only litter git-log if it was committed and tracked in the repo

as some .txt file.

But that doesn't mean that they shouldn't be backed-up, shared and merged

between different places where you yourself work on and use that code from.

Since I have a git mirror on my own host (as you do with distributed scm),

and always clone from there first, adding other "free internet service" remotes

like github, codeberg, etc later, it seems like a natural place to push such

notes to, as you'd always pull them from there with the repo.

That is not straightforward to configure in git to do on basic "git push"

however, because "push" operation there works with "[<repository> [<refspec>...]]"

destination concept.

I.e. you give it a single remote for where to push, and any number of specific

things to update as "<src>[:<dst>]" refspecs.

So when "git push" is configured with "origin" having multiple "url =" lines

under it in .git/config file (like home-url + github + codeberg), you don't get

to specify "push main+notes to url-A, but only main to url-B" - all repo URLs

get same refs, as they are under same remote.

Obvious fix conceptually is to run different "git push" commands to different

remotes, but that's a hassle, and even if stored as an alias, it'd clash with

muscle memory that'll keep typing "git push" out of habit.

Alternative is to maybe override git-push command itself with some alias, but git

explicitly does not allow that, probably for good reasons, so that's out as well.

git-push does run hooks however, and those can do the extra pushes depending on

the URL, so that's an easy solution I found for this:

#!/bin/dash

set -e

notes_remote=home

notes_url=$(git remote get-url "$notes_remote")

notes_ref=$(git notes get-ref)

push_remote=$1 push_url=$2

[ "$push_url" = "$notes_url" ] || exit 0

master_push= master_oid=$(git rev-parse master)

while read local_ref local_oid remote_ref remote_oid; do

    [ "$local_oid" = "$master_oid" ] && master_push=t && break || continue

done

[ -n "$master_push" ] || exit 0

echo "--- notes-push [$notes_remote]: start -> $notes_ref ---"

git push --no-verify "$notes_remote" "$notes_ref"

echo "--- notes-push [$notes_remote]: success ---"

That's a "pre-push" hook, which pushes notes-branch only to "home" remote,

when running a normal "git push" command to a "master" branch (to be replaced

with "main" in some repos).

Idea is to only augment "normal" git-push, and not bother running this on

weirder updates or tweaks, keeping git-notes generally in sync between different

places where you can use them, with no cognitive overhead in a day-to-day usage.
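Decision logic in that hook is easy to test separately from git. This is a sketch of same checks in Python, not a replacement for the script above. It relies on git passing remote name and URL as hook arguments, and feeding "<local-ref> <local-oid> <remote-ref> <remote-oid>" lines on stdin. Function names are hypothetical and oid values in the example are fake.

```python
def pushes_commit(stdin_lines, target_oid: str) -> bool:
    """Check if any ref update in pre-push stdin sends the given commit id."""
    for line in stdin_lines:
        fields = line.split()
        if len(fields) != 4:
            continue  # skip blank or malformed lines
        local_ref, local_oid, remote_ref, remote_oid = fields
        if local_oid == target_oid:
            return True
    return False

def should_push_notes(push_url, notes_url, stdin_lines, master_oid) -> bool:
    """Push notes only when master tip goes to the notes remote URL."""
    return push_url == notes_url and pushes_commit(stdin_lines, master_oid)

lines = ["refs/heads/master aaa111 refs/heads/master bbb222\n"]
print(should_push_notes("ssh://home/repo", "ssh://home/repo", lines, "aaa111"))  # True
print(should_push_notes("ssh://other/repo", "ssh://home/repo", lines, "aaa111"))  # False
```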

As a side-note - while these notes are normally attached to commits, for

something more global like "my todo-list for this project" not tied to specific

ref that way, it's easy to attach it to some descriptive tag like "todo", and

use with e.g. git notes edit todo, and track in the repo as well.